📘 Terraform Series – Day 12

Gujjar Apurv is a passionate DevOps Engineer in the making, dedicated to automating infrastructure, streamlining software delivery, and building scalable cloud-native systems. With hands-on experience in tools like AWS, Docker, Kubernetes, Jenkins, Git, and Linux, he thrives at the intersection of development and operations. Driven by curiosity and continuous learning, Apurv shares insights, tutorials, and real-world solutions from his journey—making complex tech simple and accessible. Whether it's writing YAML, scripting in Python, or deploying on the cloud, he believes in doing it the right way. "Infrastructure is code, but reliability is art."

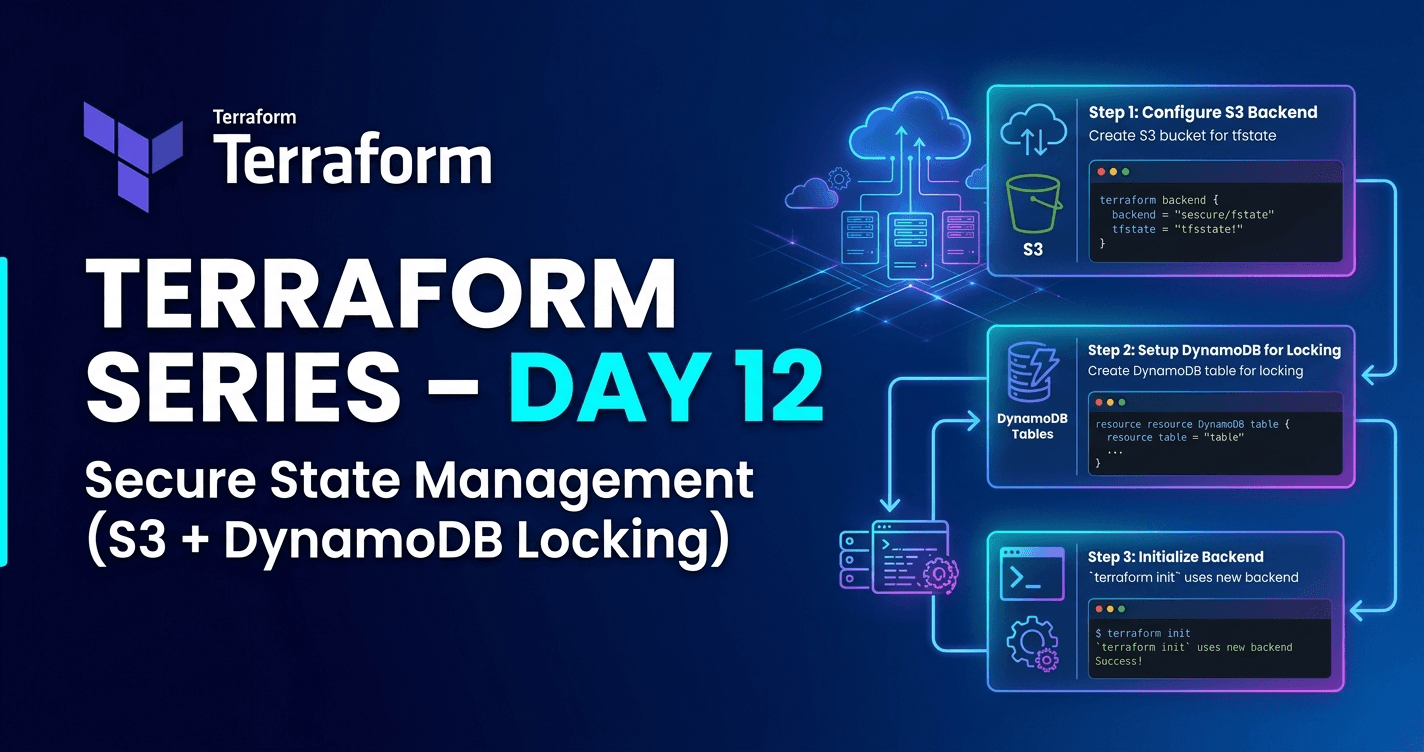

Secure State Management (S3 + DynamoDB Locking)

📝 Abstract

In Terraform, the state file (terraform.tfstate) is the most critical component that connects your configuration with real infrastructure. However, storing it locally can lead to security risks, data loss, and team conflicts.

This blog explains how to securely manage Terraform state using AWS S3 (remote storage) and DynamoDB (state locking), which is the industry-standard approach for production environments.

🎯 Objectives

After completing this blog, you will be able to:

- Understand Terraform state and its importance
- Know why .tfstate should never be pushed to GitHub
- Handle state loss scenarios
- Understand state conflicts in team environments
- Implement a remote backend using S3 + DynamoDB
- Test state locking in real scenarios

🔷 Step 1: What is Terraform State?

By default, Terraform keeps its state in a local file in the working directory:

terraform.tfstate

🧠 This file stores:

- Details of the real infrastructure Terraform manages
- Resource IDs and attributes
- The mapping between Terraform code ↔ AWS resources
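As a small illustration (the resource and bucket names are hypothetical), the resource address in your code is what the state ties to a real object in AWS:

```hcl
# Code side: Terraform knows this resource by its address, aws_s3_bucket.example
resource "aws_s3_bucket" "example" {
  bucket = "my-example-bucket-12345" # hypothetical bucket name
}

# After terraform apply, terraform.tfstate records that the address
# aws_s3_bucket.example corresponds to the real bucket "my-example-bucket-12345",
# together with attributes returned by AWS, such as its id and arn.
# Without that record, Terraform cannot tell that the bucket already exists.
```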

🔷 Step 2: Should You Push .tfstate to GitHub?

👉 ❌ NO — Never do this

⚠️ Why?

Because it contains, in plain text:

- Secrets (API keys, credentials, passwords)
- Resource IDs
- Internal infrastructure data

👉 Anyone who can read the repository can read these values, which can lead to security breaches
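For example, in the sketch below (the IAM user name is hypothetical), the generated secret access key is written to the state file. Marking the output as sensitive only hides it in plan and apply output; it does not keep it out of terraform.tfstate.

```hcl
resource "aws_iam_user" "ci" {
  name = "ci-deployer" # hypothetical IAM user name
}

resource "aws_iam_access_key" "ci" {
  user = aws_iam_user.ci.name
}

# sensitive = true redacts the value in CLI output,
# but the secret is still stored in terraform.tfstate in plain text.
output "ci_secret_access_key" {
  value     = aws_iam_access_key.ci.secret
  sensitive = true
}
```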

✅ Add to .gitignore

```gitignore
*.tfstate
*.tfstate.backup
```

🔷 Step 3: What if .tfstate is Deleted?

👉 Terraform loses track of the infrastructure it manages

❗ Result:

- Terraform thinks → nothing exists
- Next terraform apply → tries to recreate everything ❌ (creating duplicates, or failing where names must be unique, such as S3 buckets)

✅ Solutions:

- Restore from backup (terraform.tfstate.backup, which holds the previous version of the state)
- Use a remote backend (best practice)
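When no usable backup exists, another option is to re-adopt the existing resources instead of recreating them. A minimal sketch with an import block (available in Terraform 1.5 and later); the resource address and bucket name are hypothetical:

```hcl
# The resource block must still exist in your configuration.
resource "aws_s3_bucket" "example" {
  bucket = "my-example-bucket-12345" # hypothetical: the real, existing bucket name
}

# Adopt the existing bucket into state on the next plan/apply
# instead of trying to create a new one.
import {
  to = aws_s3_bucket.example
  id = "my-example-bucket-12345" # for aws_s3_bucket, the import ID is the bucket name
}
```

Running terraform plan should then report the bucket as one to import rather than one to add.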

🔷 Step 4: State Conflict (Very Important)

🔹 Scenario:

- Developer 1 → runs terraform apply
- Developer 2 → runs terraform apply at the same time

❗ What Happens?

- Both runs read and write the same state file
- One run's changes overwrite the other's, or the file gets corrupted

👉 This is called a State Conflict

🔷 Step 5: Solutions

❌ Local Shared State

- Not safe
- Not scalable

✅ Remote Backend (Best Practice)

Use:

- S3 Bucket → Store state file
- DynamoDB → Lock state

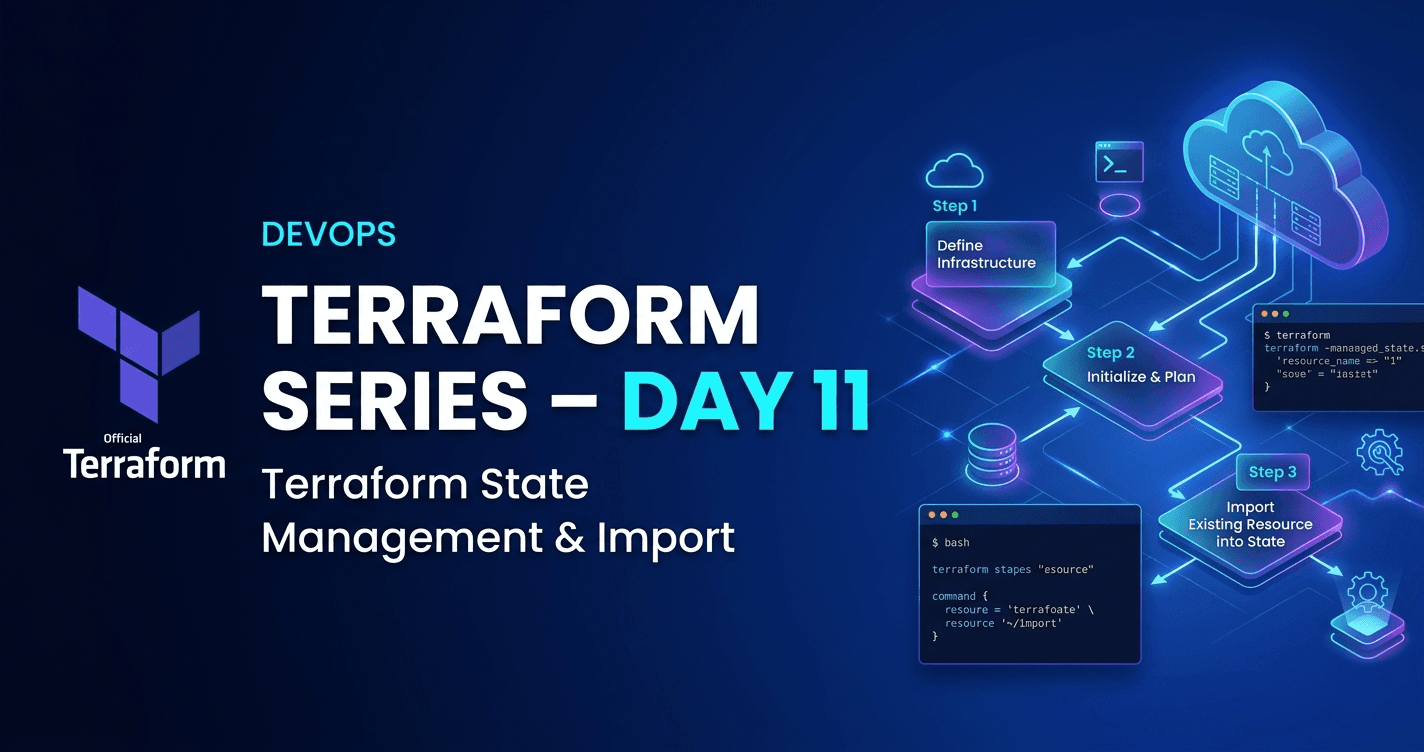

🔷 Step 6: Architecture Flow

🧠 Working:

- Terraform stores state in S3
- Before plan, apply, or destroy → Terraform checks DynamoDB for a lock
- If no lock exists → it creates one (an item keyed by LockID)
- While locked → ❌ no parallel execution
- After completion → lock removed

🔷 Step 7: Practical Implementation

📁 Step 1: Create Project Folder

```bash
mkdir remote-infra
cd remote-infra
```

📄 Step 2: Create Files

```bash
touch provider.tf terraform.tf s3.tf dynamodb.tf
```

🔧 Step 3: Provider Configuration

```hcl
# provider.tf
provider "aws" {
  region = "us-east-2"
}
```

📦 Step 4: Terraform Block

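```hcl
# terraform.tf
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 6.0"
    }
    # random_id in s3.tf comes from the hashicorp/random provider
    random = {
      source  = "hashicorp/random"
      version = "~> 3.0"
    }
  }
}
```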

🪣 Step 5: Create S3 Bucket

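```hcl
# s3.tf
# 2 random bytes give a 4-character hex suffix, because S3 bucket names must be globally unique
resource "random_id" "suffix" {
  byte_length = 2
}

resource "aws_s3_bucket" "remote_s3" {
  bucket = "dev-tf-state-${random_id.suffix.hex}"

  tags = {
    Name        = "tf-state-bucket"
    Environment = "dev"
  }
}
```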
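Because this bucket will hold state that can contain secrets, it is worth hardening it. The sketch below is an addition to the original setup, using standard AWS provider resources: versioning (so an overwritten or deleted state can be recovered), default encryption, and a public access block. New S3 buckets already get SSE-S3 encryption by default; declaring it keeps the setting visible in code.

```hcl
# s3.tf (continued): hardening for the state bucket

# Keep older versions of the state file so a bad write can be rolled back.
resource "aws_s3_bucket_versioning" "remote_s3" {
  bucket = aws_s3_bucket.remote_s3.id

  versioning_configuration {
    status = "Enabled"
  }
}

# Encrypt objects at rest with S3-managed keys (SSE-S3).
resource "aws_s3_bucket_server_side_encryption_configuration" "remote_s3" {
  bucket = aws_s3_bucket.remote_s3.id

  rule {
    apply_server_side_encryption_by_default {
      sse_algorithm = "AES256"
    }
  }
}

# Block every form of public access to the state bucket.
resource "aws_s3_bucket_public_access_block" "remote_s3" {
  bucket = aws_s3_bucket.remote_s3.id

  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}
```

With versioning enabled, terraform destroy can only delete the bucket once every object version has been removed, unless force_destroy = true is set on aws_s3_bucket.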

🔐 Step 6: Create DynamoDB Table

```hcl
# dynamodb.tf
resource "aws_dynamodb_table" "state_lock" {
  name         = "apurv-table"
  billing_mode = "PAY_PER_REQUEST"
  hash_key     = "LockID" # the S3 backend expects this exact key name

  attribute {
    name = "LockID"
    type = "S" # string
  }

  tags = {
    Name        = "apurv-table"
    Environment = "Dev"
  }
}
```
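Since the bucket name includes a random suffix, you will need its exact value when configuring the backend later. One option is an extra outputs.tf file (not in the original file list; the output names are hypothetical):

```hcl
# outputs.tf: print the names the backend configuration needs
output "state_bucket_name" {
  description = "Name of the S3 bucket that holds remote state"
  value       = aws_s3_bucket.remote_s3.bucket
}

output "lock_table_name" {
  description = "Name of the DynamoDB table used for state locking"
  value       = aws_dynamodb_table.state_lock.name
}
```

After terraform apply, terraform output -raw state_bucket_name prints just the bucket name.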

🔑 Step 7: IAM Permissions

Ensure your AWS user/role has (for example, via the AWS managed policies):

- S3 Full Access (AmazonS3FullAccess)
- DynamoDB Full Access (AmazonDynamoDBFullAccess)
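Full access is convenient for this lab, but the main project only needs to read and write its state object and its lock entries. A least-privilege sketch, assuming the bucket and table defined above, the state key terraform.tfstate, and a hypothetical policy name; check the action list against the S3 backend documentation for your Terraform version:

```hcl
# iam.tf (hypothetical file): day-to-day access to the remote backend
data "aws_iam_policy_document" "tf_backend" {
  # Terraform lists the bucket to locate the state object
  statement {
    sid       = "ListStateBucket"
    actions   = ["s3:ListBucket"]
    resources = [aws_s3_bucket.remote_s3.arn]
  }

  # Read and write the state file itself
  statement {
    sid       = "ReadWriteState"
    actions   = ["s3:GetObject", "s3:PutObject"]
    resources = ["${aws_s3_bucket.remote_s3.arn}/terraform.tfstate"]
  }

  # Create, read, and delete lock items in the DynamoDB table
  statement {
    sid = "StateLocking"
    actions = [
      "dynamodb:DescribeTable",
      "dynamodb:GetItem",
      "dynamodb:PutItem",
      "dynamodb:DeleteItem",
    ]
    resources = [aws_dynamodb_table.state_lock.arn]
  }
}

resource "aws_iam_policy" "tf_backend" {
  name   = "tf-backend-access" # hypothetical policy name
  policy = data.aws_iam_policy_document.tf_backend.json
}
```

To use it, attach the policy to the user or role that runs the main project, for example with aws_iam_user_policy_attachment.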

🔷 Step 8: Run Terraform

```bash
terraform init
terraform validate
terraform plan
terraform apply
```

🔷 Step 9: Configure Remote Backend

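Now go to your main project folder (the configuration whose state should be stored remotely) and add a backend block to its terraform block:

```hcl
terraform {
  backend "s3" {
    bucket         = "<your-bucket-name>" # the dev-tf-state-... bucket created above
    key            = "terraform.tfstate"  # path of the state object inside the bucket
    region         = "us-east-2"
    dynamodb_table = "apurv-table"        # the lock table created above
  }
}
```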
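Backend blocks cannot reference variables, so hard-coding the generated bucket name is a common stumbling block. One option, sketched below, is partial configuration: keep the fixed settings in the block and pass the rest at init time with terraform init -backend-config=backend.hcl. The file name backend.hcl is an assumption, and encrypt = true is an extra setting not in the original. Recent Terraform releases (1.10 and later) can also lock with a lock file stored in S3 itself (use_lockfile = true) instead of DynamoDB; this sketch keeps the DynamoDB approach used throughout this post.

```hcl
# terraform.tf in the main project: settings that are safe to commit
terraform {
  backend "s3" {
    key     = "terraform.tfstate"
    encrypt = true # server-side encryption for the state object
  }
}
```

```hcl
# backend.hcl (hypothetical file name): passed to terraform init
bucket         = "<your-bucket-name>" # the dev-tf-state-... bucket created above
region         = "us-east-2"
dynamodb_table = "apurv-table"
```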

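🔄 Reinitialize

```bash
terraform init
```

When terraform init finds existing local state, it asks whether to copy it to the new backend; answer yes so the state is migrated to S3.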

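🔷 Step 10: Remove Local State

Once the state has been copied to S3, the local files are no longer used and can be removed:

```bash
rm terraform.tfstate*
```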

✅ Verify Remote State

```bash
terraform state list
```

👉 Resources will still appear

✔ Because state is now stored in S3

🔷 Step 11: Test State Locking

Terminal 1:

```bash
terraform apply
```

Terminal 2 (while Terminal 1 is still running or waiting at the approval prompt):

```bash
terraform apply
```

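❗ Result:

- Terminal 2 → ❌ fails with "Error acquiring the state lock" (or waits, if run with a timeout such as terraform apply -lock-timeout=60s)
- Reason → Lock exists in DynamoDB

✔ After Completion:

- Lock is removed
- Second execution can proceed (rerun it, or it continues on its own if it was waiting)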

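After testing, destroy the resources to avoid ongoing charges: run terraform destroy in the main project first, then in remote-infra. The state bucket must be empty before it can be deleted (or set force_destroy = true on the bucket).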

🚀 Conclusion

- Terraform state is critical for infrastructure tracking
- Never store state locally in production
- Use S3 for storage + DynamoDB for locking
- This helps prevent:
  - Data loss
  - State conflicts
  - Security risks

👨‍💻 About the Author

“A complete Terraform series covering everything from fundamentals to advanced real-world infrastructure automation in a DevOps environment.”

📬 Let's Stay Connected

📧 Email: gujjarapurv181@gmail.com

🐙 GitHub: github.com/ApurvGujjar07

💼 LinkedIn: linkedin.com/in/apurv-gujjar